Artificial intelligence is no longer a mere ‘supporting technology’ operating in the background of financial systems. It has become an active agent that makes decisions, takes action, and shapes the customer experience. In high-volume, high-risk domains such as card payment systems, the interpretability of a model’s decisions has become just as critical as their accuracy. This is where Explainable Artificial Intelligence (XAI) comes into play.

This article approaches the rapidly expanding use of artificial intelligence in technology and business not from the perspective of outcomes, but from that of a fundamental need: it is no longer sufficient for artificial intelligence systems to produce correct decisions; how and why those decisions are made must also be comprehensible.

The first section examines what XAI entails and why traditional ‘black box’ models have begun to be called into question. We then look at why interpretability has become critical for high-risk domains such as card payment systems, what problems it addresses, and how it is transforming financial decision-making. The concluding sections cover concrete use cases of XAI, its treatment in the academic literature, and why, from a regulatory perspective, it has become not a preference but an obligation.

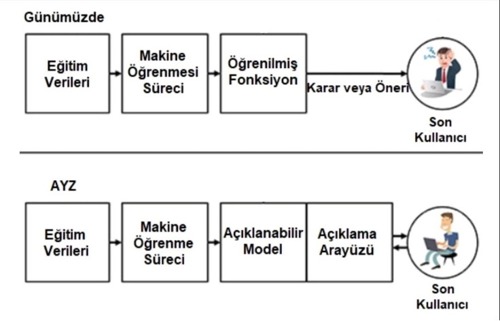

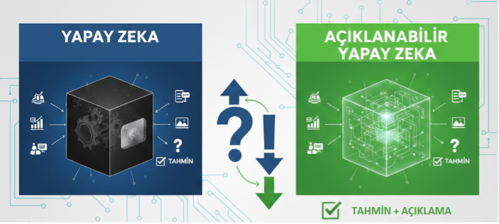

Figure 1: Artificial Intelligence and Explainable Artificial Intelligence

Why Explainability?

Many modern artificial intelligence models used in the financial sector, particularly those based on deep learning, achieve considerable predictive success, yet their decision-making mechanisms frequently function as a black box. The model produces accurate predictions, but the reasoning behind them remains opaque.

In the context of card payment systems, this opacity gives rise to critical questions:

- Why was a transaction flagged as fraudulent?

- Why was a customer’s credit card limit reduced?

- Why was a specific payment declined?

When satisfactory answers to these questions cannot be provided, the consequences extend beyond customer dissatisfaction; operational trust, internal audit processes, and regulatory compliance are equally compromised. XAI addresses precisely this gap.

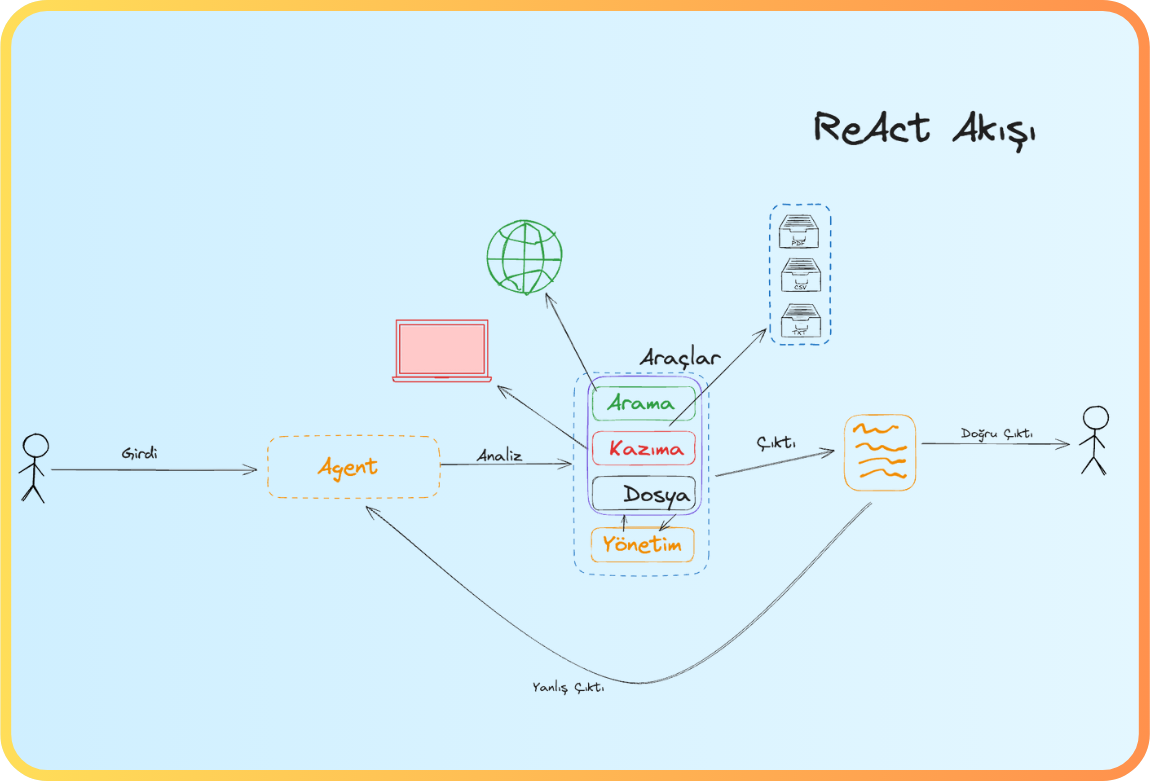

Figure 2: Black Box Model – Explainable Model

What is XAI? A Concise Definition

Explainable Artificial Intelligence refers to the collection of methods and approaches that enable an artificial intelligence model to justify its decisions in terms that are comprehensible to human users. The objective is not to conceal the mathematical complexity underlying the model, but rather to render that complexity interpretable. In other words, XAI shifts the focus from the question of ‘What did the model do?’ to the more fundamental question of ‘Why did the model do it?’

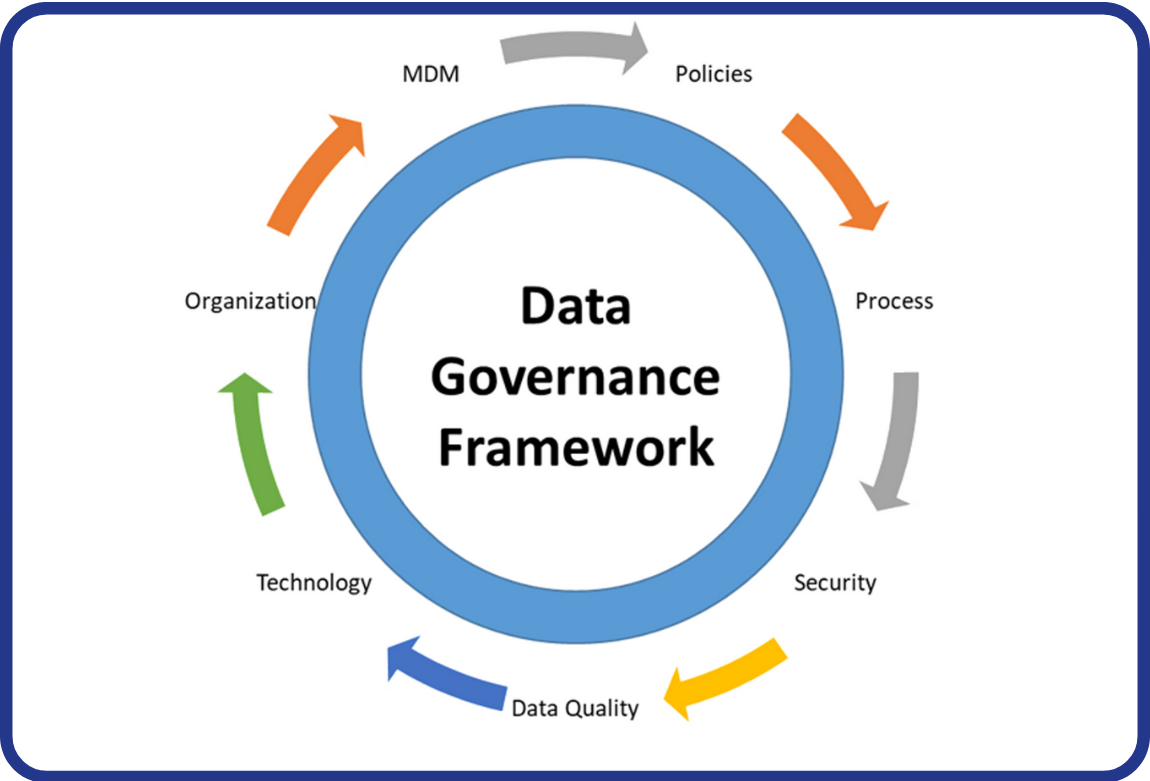

Figure 3: Explainable Artificial Intelligence Framework

Where Does XAI Stand in Card Payment Systems?

Card payment systems are high-throughput environments processing thousands of transactions per second, characterized by low error tolerance and substantial regulatory pressure. Within this context, artificial intelligence is commonly deployed in the following domains:

- Fraud detection

- Risk and behavioral scoring

- Credit card limit determination and adjustment

- Detection of anomalous transaction patterns

Across all of these use cases, XAI serves as the critical bridge between automated decision-making and human oversight.

Figure 4: Explainable Artificial Intelligence in Card Payment Systems

Case 1: The ‘Why?’ Question in Fraud Detection

Consider a scenario in which a transaction made with a customer’s card is immediately flagged as suspicious and blocked by the system. From a technical standpoint, the model has successfully fulfilled its function. However, the critical question that arises in practice is:

“Why was this transaction classified as fraudulent?”

In a scenario where XAI is implemented, the system is capable of generating explanations such as the following:

- The transaction amount is abnormally high relative to the customer’s historical spending patterns

- The transaction originated from an atypical geographic location

- Multiple similar attempts were detected within a short time interval

These explanations enable:

- Risk teams to make faster and more accurate decisions

- Customer service representatives to provide clear and substantiated responses to customers

- A reduction in false positive rates

As a result, operational efficiency improves and the customer’s trust is preserved.
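The kind of explanation described in this case can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of any production fraud system: it assumes a simple linear fraud score, and the feature names, weights, baseline values, and the `explain_flag` function are all hypothetical. Each feature's contribution is its weight multiplied by how far the transaction deviates from the customer's typical value, and the largest positive contributions are mapped to readable reasons.

```python
# Hypothetical linear fraud score on the log-odds scale. All feature names,
# weights, and baseline values are illustrative assumptions, not real parameters.
WEIGHTS = {
    "amount_zscore": 0.9,             # amount relative to the customer's history
    "km_from_usual_location": 0.002,  # distance from the usual transaction area
    "attempts_last_10_min": 0.6,      # similar attempts in a short window
}
# Assumed "typical" values for this customer, used as the reference point.
BASELINE = {
    "amount_zscore": 0.0,
    "km_from_usual_location": 5.0,
    "attempts_last_10_min": 1.0,
}
REASONS = {
    "amount_zscore": "Amount is abnormally high for this customer",
    "km_from_usual_location": "Transaction originated from an atypical location",
    "attempts_last_10_min": "Multiple similar attempts within a short interval",
}


def explain_flag(features, top_k=3):
    """Return up to top_k reasons that pushed the score above the baseline."""
    contributions = {
        name: WEIGHTS[name] * (features[name] - BASELINE[name]) for name in WEIGHTS
    }
    ranked = sorted(contributions.items(), key=lambda item: item[1], reverse=True)
    # Keep only features that increased the score; negative ones argue against fraud.
    return [
        (REASONS[name], round(value, 3)) for name, value in ranked[:top_k] if value > 0
    ]


transaction = {
    "amount_zscore": 4.0,
    "km_from_usual_location": 1205.0,
    "attempts_last_10_min": 4.0,
}
explanation = explain_flag(transaction)
for reason, contribution in explanation:
    print(f"{contribution:+.2f}  {reason}")
```

A risk analyst or customer service representative would see the ranked reasons rather than the raw score. For non-linear models the contributions cannot be read off the weights in this way, which is where attribution methods such as SHAP and LIME are typically used.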

Case 2: Risk Scoring and Credit Card Limit Decisions

Credit limit decisions are sensitive not only from a financial risk perspective, but also in terms of customer experience. XAI provides two critical benefits in this context:

- Internal transparency: risk teams are able to observe which variables the model considered and to what extent.

- External explainability: decision-making processes become traceable for regulatory authorities or audit teams.

Methods widely employed in the literature, most notably SHAP (Lundberg & Lee, 2017) and LIME (Ribeiro et al., 2016), support this process by showing, quantitatively and visually, which factors drove an individual decision.
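To make the idea behind SHAP concrete, the following Python sketch computes exact Shapley values for a single decision. It is a minimal illustration under stated assumptions, not how the SHAP library works internally: `risk_score` is a hypothetical toy model, the feature names and numbers are invented, and an "absent" feature is represented by substituting a baseline value, which is only one common convention. Enumerating every coalition is feasible here only because there are three features; practical tools rely on approximations.

```python
from itertools import combinations
from math import factorial


def risk_score(x):
    """Hypothetical toy risk model with one interaction term (illustrative only)."""
    utilization, late_payments, tenure_years = x
    return (
        0.5 * utilization
        + 0.2 * late_payments
        - 0.05 * tenure_years
        + 0.3 * utilization * late_payments
    )


def shapley_values(model, instance, baseline):
    """Exact Shapley values; features outside a coalition take their baseline value."""
    n = len(instance)
    values = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        phi = 0.0
        for size in range(n):
            # Weight of a coalition of this size in the Shapley average.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                with_i = [
                    instance[j] if (j in subset or j == i) else baseline[j]
                    for j in range(n)
                ]
                without_i = [
                    instance[j] if j in subset else baseline[j] for j in range(n)
                ]
                phi += weight * (model(with_i) - model(without_i))
        values.append(phi)
    return values


FEATURES = ["utilization", "late_payments", "tenure_years"]
baseline = [0.3, 0.0, 5.0]   # assumed "typical" customer
customer = [0.9, 2.0, 1.0]   # customer whose limit decision is being explained

phi = shapley_values(risk_score, customer, baseline)
for name, value in zip(FEATURES, phi):
    print(f"{name:>14}: {value:+.3f}")
```

The contributions add up to the difference between the customer's score and the baseline score, which is what lets a risk team state how much each variable moved a specific decision.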

What Does the Academic Literature Indicate?

The academic literature indicates that XAI is not merely a desirable feature in financial systems, but an essential capability. Systematic reviews report that explainable models:

- enhance reliability

- facilitate earlier detection of model errors

- streamline regulatory compliance processes (Černevičienė and Kabašinskas, 2024).

Similarly, studies on financial time series and risk forecasting underscore that XAI integration strengthens human–machine collaboration (Arsenault et al., 2018).

The Regulatory Perspective: ‘If You Cannot Explain It, You Cannot Use It’

Numerous regulatory frameworks, most prominently that of the European Union, mandate that decisions supported by artificial intelligence be justifiable. This imperative elevates XAI beyond the agenda of technical teams alone, placing it firmly within the purview of legal, compliance, and business units as well.

In short: a decision that cannot be explained is not sustainable.

Conclusion: XAI is Not a Technology — It is a Paradigm

The success of artificial intelligence in card payment systems is no longer measured solely by accuracy rates. The defining factor is whether the decisions these systems make are comprehensible, contestable, and defensible by human stakeholders. In this regard, XAI is not an add-on feature; it is a foundational component of modern financial architecture oriented around trust, transparency, and sustainability. Just as agility has evolved over time, artificial intelligence is now compelled to be not merely more powerful, but more intelligible.

References

- Suriya, S., & Sireesha, R. M. (2025). Credit Card Fraud Detection using Explainable AI Methods. Journal of Information Systems Engineering and Management, 10(24s).

- Almalki, F., & Masud, M. (2025). Financial Fraud Detection Using Explainable AI and Stacking Ensemble Methods. arXiv:2505.10050.

- Unmasking Banking Fraud: Unleashing the Power of Machine Learning and Explainable AI (XAI) on Imbalanced Data. Information 2024, 15(6), 298.

- Černevičienė, J., & Kabašinskas, A. (2024). Explainable artificial intelligence (XAI) in finance: a systematic literature review. Artificial Intelligence Review, 57, 216.

- Lundberg, S. M., & Lee, S.-I. (2017). A Unified Approach to Interpreting Model Predictions.

- Ribeiro, M. T., Singh, S., & Guestrin, C. (2016). “Why Should I Trust You?” Explaining the Predictions of Any Classifier.

- Zafar, U., & Fan, W. (2025). Methodological Challenges in Explainable AI for Fraud Detection: A Systematic Literature Review.

- Jain, A., Kulkarni, R., & Lin, S. (2025). Explainable AI in Big Data Fraud Detection. arXiv:2512.16037.

- Explainable AI Models in Financial Risk Prediction: Bridging Accuracy and Interpretability in Modern Finance (2023).

- Explainable AI (XAI) Analysis Using SHAP for Credit Card Fraud (2025).

- Arsenault, P.D., Wang, S., & Patenande, J.M. (2018). A Survey of Explainable arXiv Preprint. http://arxiv.org/abs/2407.15909

- Figure 1: https://tr.linkedin.com/posts/nilg%C3%BCn-%C5%9Feng%C3%B6z-phd-12203387_a%C3%A7%C4%B1klanabilir-yapay-zeka-modeli-insan-kullan%C4%B1c%C4%B1lar%C4%B1n-activity-6972974767620411392-YovV

- Figure 2: Google Gemini

- Figure 3: https://ayyucekizrak.medium.com/a%C3%A7%C4%B1klanabilir-yapay-zeka-nedir-ve-i%CC%87htiya%C3%A7-m%C4%B1d%C4%B1r-65adef9b086

- Figure 4: Google Gemini
