Smartphones are no longer just devices for running applications. They have evolved into small assistants that correct what we write, suggest replies, analyze our habits, and often do all of this quietly in the background. Moreover, some of these experiences now take place directly on the device, without requiring an internet connection.

At the center of this transformation in the Android ecosystem are two things: the on-device AI approach, which runs artificial intelligence directly on the device instead of relying on the cloud, and Gemini Nano, the model Google designed for this purpose.

What Is On-Device AI?

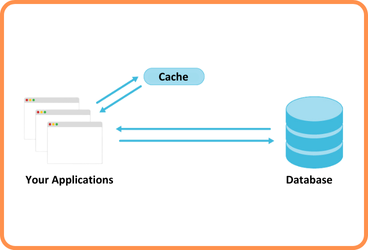

On-device AI is an approach in which artificial intelligence models run directly on the user’s device rather than on cloud servers. Input data, model execution, and output generation all stay on the device, so the data being processed does not need to leave it.

This approach lets applications work without an internet connection, reduces latency, and improves privacy because user data remains on the device. It can also lower infrastructure costs, since individual operations do not require a request to a remote server.

In the Android ecosystem, the core of this architecture is Gemini Nano, an on-device generative AI model developed by Google and tightly integrated into the Android system architecture.

What Is Gemini Nano?

Gemini Nano is a lightweight and efficient generative AI model optimized specifically for Android devices. Unlike large, general-purpose cloud models, it is designed for short text and relatively simple tasks, allowing it to deliver fast, low-latency experiences directly on the device.

Once the model is available on the device, Gemini Nano runs entirely locally and does not need an internet connection to process requests. It is effective in scenarios such as text summarization, spelling and grammar correction, text rewriting, generating smart replies in chat interfaces, and basic text classification. These capabilities make it possible to design smooth and responsive user experiences, especially on interaction-heavy screens.

An Android application can use Gemini Nano to correct user-written text, summarize long content, or provide context-aware reply suggestions in chat interfaces. Since all of these operations happen on the device, they offer significant advantages in terms of both performance and privacy.

Starting with Android 14, Gemini Nano is managed through a system component called AICore. This setup frees developers from dealing with operational details such as downloading, distributing, or updating the model. Instead, developers access Gemini Nano’s capabilities through APIs provided by AICore, allowing on-device AI to be integrated into applications without adding architectural complexity.

What Can Be Done with the ML Kit GenAI API?

Developers access Gemini Nano’s capabilities through the ML Kit GenAI API. This API layer abstracts away details such as model management, making on-device AI practical and controllable from the application side.

With ML Kit GenAI, tasks such as summarizing long texts, correcting spelling and grammar mistakes, rewriting text in different tones, and generating smart reply suggestions in chat interfaces can all be performed directly on the device. Content can also be classified, for example to flag profanity, sensitive data, or offensive language.

All of these operations are carried out without requiring the data to leave the device.
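Because availability depends on the device and on whether the model has been downloaded yet, an app typically checks a feature’s status before offering an AI-backed action. The sketch below, written in Python for brevity (an Android app would use Kotlin), maps a set of hypothetical status values to app behavior. The enum and function names are illustrative assumptions, not the ML Kit GenAI API; consult the official reference for the real status types.

```python
from enum import Enum, auto


class FeatureStatus(Enum):
    # Hypothetical states an on-device feature can be in (not the real ML Kit enum).
    AVAILABLE = auto()
    DOWNLOADABLE = auto()
    DOWNLOADING = auto()
    UNAVAILABLE = auto()


class Action(Enum):
    RUN_ON_DEVICE = auto()
    START_DOWNLOAD_THEN_RUN = auto()
    WAIT_FOR_DOWNLOAD = auto()
    USE_FALLBACK = auto()


def plan_action(status: FeatureStatus) -> Action:
    """Map a feature status to what the app should do next."""
    if status is FeatureStatus.AVAILABLE:
        return Action.RUN_ON_DEVICE
    if status is FeatureStatus.DOWNLOADABLE:
        # The system service fetches the model; the app only requests it.
        return Action.START_DOWNLOAD_THEN_RUN
    if status is FeatureStatus.DOWNLOADING:
        return Action.WAIT_FOR_DOWNLOAD
    # Unsupported device or feature: hide the feature or use a non-AI path.
    return Action.USE_FALLBACK
```

Keeping the non-AI fallback explicit means the same screen still works on devices where Gemini Nano is not supported.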

AICore: Android’s New AI Engine

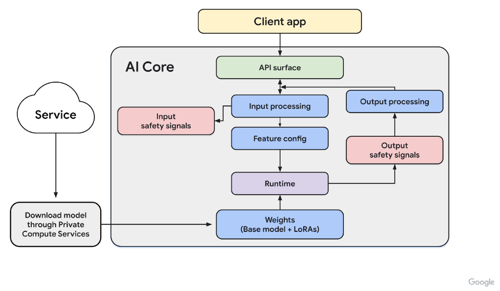

AICore is a system service responsible for running AI models on Android devices. It sits between applications and models, enabling on-device AI models such as Gemini Nano to operate in a secure, isolated environment. The system directly controls when and how a model runs, as well as how it uses CPU and memory resources.

AICore operates in line with Android’s Private Compute approach. This design aims to process user data in an isolated environment without allowing it to leave the device. Within this model, inputs provided to the AI and the outputs it generates are not stored persistently.

AICore does not have direct access to the internet. Model downloads and updates are handled through separate, system-managed, and secure services. This limits the privileges of AI components and allows both user data and device resources to be managed in a more controlled way. Such an architecture helps ensure that AI is used in a more predictable and auditable manner, particularly in applications where security and legal compliance are critical.

Supported Devices

Because Gemini Nano requires a certain level of hardware performance, it is currently available primarily on high-end Android devices. The model is actively supported on devices such as the Pixel 8 Pro, Pixel 9, and Pixel 9 Pro, where it is optimized for Google’s Tensor G3 and Tensor G4 processors.

Google aims to expand on-device AI capabilities to more devices over time, and Gemini Nano support is being extended incrementally to flagship devices from other manufacturers such as Samsung and Xiaomi.

On-Device AI or Cloud AI?

On-device AI and cloud-based AI solutions offer different advantages depending on the problem being addressed. On-device AI stands out in scenarios that require low latency, offline operation, and strong privacy guarantees. Cloud AI, on the other hand, is better suited for complex analyses and workloads that demand significant computational power.

Within the Android ecosystem, on-device models like Gemini Nano are typically used for features that are close to the user interface and require fast responses. In contrast, scenarios such as long document analysis, complex question-answering systems, or operations involving large datasets are still commonly handled in the cloud. In practice, these two approaches are best viewed not as alternatives, but as complementary solutions designed for different needs.
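One way to make this split concrete is a small routing function that prefers the on-device model for short, UI-level tasks and falls back to the cloud only when that is acceptable. In this Python sketch, the task names, the `MAX_ON_DEVICE_CHARS` budget, and the rule that sensitive text is never silently routed to the cloud are all assumptions chosen for illustration.

```python
from dataclasses import dataclass

# Tasks described above as a good fit for on-device models (illustrative names).
ON_DEVICE_TASKS = {"summarize", "proofread", "rewrite", "smart_reply", "classify"}

# Assumed input budget; the real limit depends on the model and API.
MAX_ON_DEVICE_CHARS = 4000


@dataclass
class Request:
    task: str
    text: str
    contains_sensitive_data: bool


def choose_backend(req: Request, on_device_available: bool, online: bool) -> str:
    """Return "on_device", "cloud", "reject", or "unavailable"."""
    fits_on_device = (
        req.task in ON_DEVICE_TASKS and len(req.text) <= MAX_ON_DEVICE_CHARS
    )
    if on_device_available and fits_on_device:
        return "on_device"
    if req.contains_sensitive_data:
        # Policy assumption: never fall back to the cloud with sensitive text.
        return "reject"
    if online:
        return "cloud"
    return "unavailable"
```

The "reject" branch reflects a design choice: when privacy is the reason for using on-device AI, a silent cloud fallback would undo that guarantee.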

Use Cases of Gemini Nano in Banking Applications

Banking applications are one of the most natural use cases for on-device AI. The high expectation of privacy and the need to provide fast, context-aware experiences make this approach especially valuable. However, one principle applies to all of these scenarios: Gemini Nano is not the decision-maker; it is a component that informs and supports the user.

For example, long contracts and campaign texts can be summarized on the device with Gemini Nano. The user is presented with a few simple, clear bullet points, and the entire process happens without an internet connection. The original text remains valid, while the AI simply plays a supportive role by making the content more readable.
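On-device models accept limited input, so a long contract may need to be split before summarization. The Python sketch below groups paragraphs into chunks under an assumed character budget and passes each chunk to a `summarize` callable supplied by the caller, a hypothetical stand-in for whatever on-device summarization call the app uses. It returns the original text alongside the bullets, so the original remains the authoritative version.

```python
from typing import Callable, Dict, List, Union


def chunk_paragraphs(text: str, max_chars: int) -> List[str]:
    """Group paragraphs into chunks no longer than max_chars.

    A paragraph longer than max_chars is hard-split at max_chars boundaries.
    """
    chunks: List[str] = []
    current = ""
    for para in (p.strip() for p in text.split("\n\n")):
        if not para:
            continue
        pieces = [para[i:i + max_chars] for i in range(0, len(para), max_chars)]
        for piece in pieces:
            candidate = f"{current}\n\n{piece}" if current else piece
            if len(candidate) <= max_chars:
                current = candidate
            else:
                chunks.append(current)
                current = piece
    if current:
        chunks.append(current)
    return chunks


def summarize_document(
    text: str, summarize: Callable[[str], str], max_chars: int = 2000
) -> Dict[str, Union[str, List[str]]]:
    """Summarize chunk by chunk; keep the original text next to the summary."""
    bullets = [summarize(chunk) for chunk in chunk_paragraphs(text, max_chars)]
    return {"original": text, "summary": bullets}
```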

In in-app support screens, user-written messages can be analyzed on-device to generate contextually relevant response suggestions. These suggestions are not sent automatically; they are reviewed and used by the user or the support agent. This both increases operational efficiency and preserves human oversight.
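The review step can be enforced in code rather than left to convention. In this hypothetical Python sketch, AI-generated replies are stored as drafts, and `send` refuses any draft that a person has not explicitly approved; the class and method names are illustrative.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Suggestion:
    text: str
    approved_by: Optional[str] = None  # set only by an explicit human action


@dataclass
class ReplyComposer:
    """Holds AI-generated reply drafts; nothing is sent without approval."""
    suggestions: List[Suggestion] = field(default_factory=list)
    sent: List[str] = field(default_factory=list)

    def add_suggestions(self, texts: List[str]) -> None:
        self.suggestions = [Suggestion(t) for t in texts]

    def approve(
        self, index: int, reviewer: str, edited_text: Optional[str] = None
    ) -> Suggestion:
        s = self.suggestions[index]
        if edited_text is not None:
            s.text = edited_text  # the reviewer may rewrite the draft
        s.approved_by = reviewer
        return s

    def send(self, suggestion: Suggestion) -> None:
        if suggestion.approved_by is None:
            raise PermissionError("AI suggestion must be reviewed before sending")
        self.sent.append(suggestion.text)
```

Recording who approved each reply also leaves a trace of human oversight for later review.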

In spending summaries and “mini financial coach” scenarios, monthly data can be interpreted in a concise and easy-to-understand way by Gemini Nano. Numerical calculations remain on the backend, while the explanation presented to the user is shaped on the device. This provides a personalized experience without the need to send additional data to the server.
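A practical safeguard for this split is to let the backend supply the figures and then check that the on-device explanation does not introduce numbers of its own. The Python sketch below builds a prompt from backend-computed values and flags any number in the generated text that is not among them. The prompt wording and the number format (dot as decimal separator, comma as thousands separator) are assumptions.

```python
import re
from decimal import Decimal
from typing import Dict, Set

# Digits with optional thousands commas and an optional decimal part.
NUMBER = re.compile(r"\d[\d,]*(?:\.\d+)?")


def build_prompt(figures: Dict[str, Decimal]) -> str:
    lines = [f"{name}: {value}" for name, value in figures.items()]
    return (
        "Explain these monthly spending figures in two short sentences. "
        "Use only the numbers given.\n" + "\n".join(lines)
    )


def numbers_in(text: str) -> Set[Decimal]:
    return {Decimal(m.replace(",", "")) for m in NUMBER.findall(text)}


def unsupported_numbers(generated: str, figures: Dict[str, Decimal]) -> Set[Decimal]:
    """Numbers in the generated text that the backend did not provide."""
    return numbers_in(generated) - set(figures.values())
```

If `unsupported_numbers` returns anything, the app can fall back to a fixed template. This simple check also flags legitimate numbers such as dates, so it is a coarse filter rather than a complete validator.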

Additionally, when users enter free text, inappropriate or sensitive content such as profanity, credit card numbers, or identity details can be detected and filtered on the device before it is stored or sent anywhere. This keeps such data under the application’s control without it ever leaving the device.
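The scenario above uses Gemini Nano for this detection; a deterministic rule-based check is a common complement, because card numbers have a well-defined structure. The Python sketch below is a swapped-in technique rather than a Gemini Nano API: it masks digit sequences that pass the Luhn checksum and checks input against a placeholder word list. The regular expression and `BLOCKED_WORDS` are illustrative assumptions, and identity-number formats, which vary by country, are not covered.

```python
import re

# Candidate card numbers: 13-19 digits, optionally separated by spaces or dashes.
CARD_CANDIDATE = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")

# Placeholder list; a real app would use a maintained list or a classifier.
BLOCKED_WORDS = {"darn", "heck"}


def luhn_valid(digits: str) -> bool:
    """Standard Luhn checksum over a string of digits."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def mask_cards(text: str) -> str:
    """Replace Luhn-valid card numbers with stars, keeping the last four digits."""
    def repl(m):
        digits = re.sub(r"\D", "", m.group())
        if not luhn_valid(digits):
            return m.group()  # leave numbers that fail the checksum untouched
        return "*" * (len(digits) - 4) + digits[-4:]
    return CARD_CANDIDATE.sub(repl, text)


def contains_blocked_word(text: str) -> bool:
    return any(w in BLOCKED_WORDS for w in re.findall(r"[a-z']+", text.lower()))
```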

Security and Risks in On-Device AI

Keeping data on the device provides a significant privacy advantage compared to cloud-based solutions. However, this does not mean that security risks disappear entirely; rather, those risks shift from the cloud to the device. For this reason, on-device AI should not be treated as inherently secure, but as an architectural layer that requires careful design and regular review.

On Android, this approach is supported by AICore’s isolated execution model. AICore runs AI workloads in a separate environment and prevents inputs and outputs from being stored persistently. This provides a solid security foundation. Nevertheless, the fact that AICore does not directly access the internet does not mean that all AI components on the device are fully protected from attacks. In domains such as banking, where security and legal compliance are critical, that assumption can be misleading: it reflects a “secure because it is hidden” mindset, which is insufficient on its own.

Key Security Risks

Data Poisoning and Backdoor Attacks

If malicious samples are introduced into the training, tuning, or update processes of on-device models (such as system-provided models like Gemini Nano), model outputs can be altered without obvious signs. Even a small number of corrupted data points may cause the model to behave unexpectedly under certain conditions.

Recent reports of security issues related to Gemini models have brought this topic back into focus. In particular, so-called zero-click vulnerabilities describe attack scenarios that can be triggered without any user interaction. In banking contexts, this could lead to incorrect or misleading spending summaries, explanatory texts, or user-facing guidance.

Model Inversion Attacks

Model inversion attacks aim to infer information about training data or sensitive user inputs based on the model’s outputs. In other words, even if a model does not directly reveal sensitive data, its responses may leak indirect clues. This risk becomes more pronounced in interactive features such as keyboard prediction, smart reply suggestions, or text completion. For such scenarios, both the inputs provided to the model and the outputs presented to users must be carefully designed.
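One way to reduce this risk is data minimization: give the model only the fields a feature needs, so there is less sensitive input for its outputs to reveal. The Python sketch below applies an allow-list to records before they are turned into a prompt; the field names are a hypothetical schema.

```python
from typing import Any, Dict, List

# Fields a spending-summary prompt actually needs (hypothetical schema).
ALLOWED_FIELDS = {"category", "amount", "month"}


def minimize(record: Dict[str, Any]) -> Dict[str, Any]:
    """Keep only allow-listed fields; identifiers never reach the model."""
    return {k: v for k, v in record.items() if k in ALLOWED_FIELDS}


def minimize_all(records: List[Dict[str, Any]]) -> List[Dict[str, Any]]:
    return [minimize(r) for r in records]
```

An allow-list is preferable to a deny-list here, because a newly added sensitive field is excluded by default.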

Model Update Risks

Although AICore itself does not directly access the internet, models are downloaded and updated through controlled services. A weakness in this distribution process could allow a model’s behavior to change without detection or enable a malicious update to reach devices. For this reason, updates to on-device AI models must be handled more cautiously than typical application updates. Model files should be treated as assets that are just as critical as application code.
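For Gemini Nano itself, downloads and updates are handled by the system rather than by the app. For models an app ships or downloads on its own, however, a basic safeguard is to verify each file against a digest pinned from a trusted source before loading it. The Python sketch below shows that check with SHA-256; the file path and the origin of the expected digest are assumptions.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash the file in chunks so large models are not loaded into memory at once."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for block in iter(lambda: f.read(chunk_size), b""):
            h.update(block)
    return h.hexdigest()


def verify_model(path: Path, expected_sha256: str) -> bool:
    """Return True only if the file's digest matches the pinned value."""
    actual = sha256_of(path)
    # Constant-time comparison of the two hex strings.
    return hmac.compare_digest(actual, expected_sha256.lower())
```

A pinned hash only helps if the expected value itself arrives through a trusted channel; signing model files provides stronger guarantees than a bare digest.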

Black-Box Nature and Explainability

On-device AI models often operate as black boxes, meaning it is not always possible to clearly explain why or how a particular output was produced. When errors or unexpected results occur, identifying the root cause can be challenging. In regulated domains such as banking, this lack of explainability introduces additional risk. Being unable to clearly justify how a piece of text or a recommendation was generated can complicate both technical investigations and compliance processes.
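Explainability gaps can be partly offset by recording when and how AI output was produced, without storing the user’s data. The Python sketch below builds an audit record that keeps keyed hashes (HMAC-SHA-256) of the prompt and output instead of their text, because plain hashes of short inputs can be guessed. It also stores the feature name, a model version string, and whether a person reviewed the result. The record layout is hypothetical, the version string is whatever identifier the app can obtain (an assumption), and key management is out of scope.

```python
import hashlib
import hmac
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AiAuditRecord:
    feature: str          # e.g. "spending_summary"
    model_version: str    # identifier available to the app (assumed)
    input_hmac: str       # keyed hash instead of the raw prompt
    output_hmac: str      # keyed hash instead of the raw output
    shown_to_user: bool
    reviewed_by_human: bool
    timestamp: str


def make_record(key: bytes, feature: str, model_version: str, prompt: str,
                output: str, shown: bool, reviewed: bool) -> AiAuditRecord:
    def digest(s: str) -> str:
        return hmac.new(key, s.encode("utf-8"), hashlib.sha256).hexdigest()

    return AiAuditRecord(
        feature, model_version, digest(prompt), digest(output),
        shown, reviewed, datetime.now(timezone.utc).isoformat(),
    )


def to_log_line(record: AiAuditRecord) -> str:
    return json.dumps(asdict(record), sort_keys=True)
```

Because the same input always produces the same keyed hash, investigators holding the key can later confirm whether a specific text was processed without the log ever containing it.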

In short, on-device AI is not a complete security solution on its own. Effective use requires limiting the amount of data being processed, maintaining human oversight for certain AI-generated outputs, and clearly informing users where and how AI is used. Financially sensitive data typically remains on the backend, while on-device AI serves as a supporting component that helps present information to users in a clearer and more understandable way.

Conclusion

In the Android ecosystem, on-device AI has moved beyond being merely a feature that offers speed or offline capability. By keeping data on the device, this approach enables AI to be used in a way that is closer to the user and more tightly controlled. With Gemini Nano and AICore, developers can design smoother experiences in many scenarios without relying heavily on the cloud.

That said, running AI on the device does not automatically guarantee security. Especially in areas such as banking, where data privacy and legal obligations are critical, this approach must be handled with care. While AICore’s isolation model and logging restrictions provide a strong foundation, risks related to model updates and certain attack scenarios cannot be fully eliminated.

When positioned correctly, Gemini Nano can significantly improve user experience in areas such as text summarization, smart reply suggestions, and pre-filtering sensitive content. Conversely, if security considerations are neglected, privacy issues and compliance challenges may arise.

Ultimately, on-device AI is not a magical solution that solves every problem on its own. However, when used within well-defined boundaries, supported by appropriate security measures, and applied thoughtfully, it becomes a powerful engineering tool. When this balance is achieved, solutions like Gemini Nano can form a natural part of reliable and sustainable systems.

Resources

- AI on Android: https://developer.android.com/ai

- Gemini Nano Overview: https://developer.android.com/ai/gemini-nano

- ML Kit GenAI – On-device Gemini Nano: https://developer.android.com/ai/gemini-nano/ml-kit-genai

- A New Foundation for AI on Android – Android Developers Blog: https://android-developers.googleblog.com/2023/12/a-new-foundation-for-ai-on-android.html

- Introduction to Privacy and Safety for Gemini Nano: https://android-developers.googleblog.com/2024/10/introduction-to-privacy-and-safety-gemini-nano.html

- On-device AI: The emergence of localised intelligence: https://www.verdict.co.uk/on-device-ai-localised-intelligence-growth/

- GeminiJack – Google Gemini Zero-Click Vulnerability: https://noma.security/blog/geminijack-google-gemini-zero-click-vulnerability/

- Model Inversion Attacks – Witness AI Blog: https://witness.ai/blog/model-inversion-attacks/
